Best Workstations for LLM Inference

96 AI workstations optimized for LLM inference from 17 vendors. Updated June 2026.

96 workstations are tagged for LLM inference, with prices ranging from $39 to $35,114. This hardware is designed for serving large language models in production with low latency.

Options are available from vendors including Origin PC, Thinkmate, Silicon Mechanics, and Boxx, covering entry-level to enterprise configurations.

Key Capabilities

- Optimized for throughput

- Low-latency response times

- Efficient batch processing

- Production-ready reliability

Top LLM Inference Workstations

All 96 LLM Inference Workstations

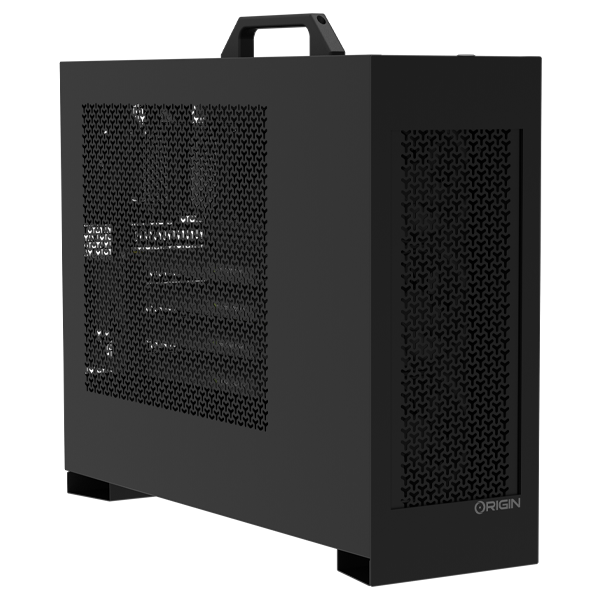

L-Class V2 Best

Origin PC • Workstations

LLM Inference • Data Analytics

$35,114

L-Class V2 Better

Origin PC • Workstations

LLM Inference • Data Analytics

$27,598

SuperWorkstation741GE-TNRT

Thinkmate • Workstations

Generative AI • LLM Training • Computer Vision

$26,714

Super Workstation741GE-TNRT

Silicon Mechanics • Workstations

LLM Inference • Data Analytics

$26,196

L-Class V2 Good

Origin PC • Workstations

LLM Inference • Data Analytics

$21,890

APEXX T4

Boxx • Workstations

Computer Vision • LLM Inference

$19,881

Super Workstation741A-T

Silicon Mechanics • Workstations

LLM Inference • Data Analytics

$19,718

APEXX W4

Boxx • Workstations

HPC • LLM Training • LLM Inference

$19,454

Super Workstation751A-I

Silicon Mechanics • Workstations

LLM Inference • Data Analytics

$19,414

VSXR5 760S4

Thinkmate • Workstations

Generative AI • LLM Training • Computer Vision

$19,101

SuperWorkstation741A-T

Thinkmate • Workstations

HPC • LLM Inference • Data Analytics

$18,998

SuperWorkstation751A-I

Thinkmate • Workstations

Computer Vision • LLM Inference

$18,694

Exxact Valence Workstation - 1x AMD Ryzen Threadripper Pro 9000/7000 WX-Series

Exxact • Workstations

LLM Inference • Data Analytics

$16,914

ProMagix™ HD360A Dual Epyc Workstation

Velocity Micro • Workstations

LLM Inference • Data Analytics

$16,499

Data Science WhisperStation - NVIDIA Data Science Workstation

Microway • Workstations

LLM Inference • Data Analytics

$15,000

AI STATION PRO MAX Intel Xeon W Tower Desktop PC

Steiger Dynamics • Workstations

Generative AI • LLM Training • LLM Inference

$14,781

SuperWorkstation551A-T

Thinkmate • Workstations

Computer Vision • LLM Inference • Generative AI

$13,678

ProMagix™ HD360A Epyc Workstation

Velocity Micro • Workstations

LLM Inference • Data Analytics

$13,549

Super Workstation551A-T

Silicon Mechanics • Workstations

LLM Inference • Data Analytics

$13,430

BIZON X6000 G2 – Dual AMD EPYC 9004, 9005 Series CPUs – Scientific Research and Rendering Workstation PC – Up to 3 GPU, Up to 384 Cores CPU

Bizon • Workstations

Computer Vision • LLM Inference • Generative AI

$13,064

APEXX T4

Boxx • Workstations

HPC • LLM Training • LLM Inference

$12,764

TT-LoudBox

Tenstorrent • Workstations

Computer Vision • LLM Inference

$12,000

APEXX T3 AMD Threadripper workstation

Boxx • Workstations

Computer Vision • LLM Training • LLM Inference

$11,688

Super Workstation531R-I

Silicon Mechanics • Workstations

LLM Inference • Data Analytics

$10,988

SuperWorkstation531R-I

Thinkmate • Workstations

Computer Vision • LLM Inference

$10,576

Exxact Valence Workstation - 1x Intel Xeon W-2400 Series processor

Exxact • Workstations

LLM Inference • Data Analytics

$10,032

TT-QuietBox™ 2 (Blackhole®)

Tenstorrent • Workstations

LLM Inference • LLM Training • Data Analytics

$9,999

SuperWorkstation531A-IL

Thinkmate • Workstations

Generative AI • LLM Training • Computer Vision

$8,916

AI STATION PRO MAX AMD Threadripper WRX90 Tower Desktop PC

Steiger Dynamics • Workstations

Generative AI • LLM Training • LLM Inference

$8,852

VSXR5 760W34

Thinkmate • Workstations

Generative AI • LLM Training • Computer Vision

$8,535

shroud Signature Edition

Maingear • Workstations

Computer Vision • LLM Inference • LLM Fine-Tuning

$8,299

BIZON X5500 G2 – AI Deep Learning & Data science Workstation PC, NVIDIA – AMD Threadripper Pro

Bizon • Workstations

Computer Vision • LLM Inference • Generative AI

$7,847

SuperWorkstation531A-I

Thinkmate • Workstations

Computer Vision • LLM Inference • HPC

$7,702

Super Workstation531A-I

Silicon Mechanics • Workstations

HPC • LLM Inference • Data Analytics

$7,556

M-Class V2 Best

Origin PC • Workstations

Computer Vision • LLM Inference • Generative AI

$7,099

SuperWorkstation531AW-TC

Thinkmate • Workstations

Generative AI • LLM Training • Computer Vision

$6,858

Panorama XL Prebuilt (i9-14900KF, 96GB DDR5, RTX 5090, 4TB SSD)

Empowered PC • Workstations

Computer Vision • LLM Inference • Generative AI

$6,840

Super Workstation532AW-C

Silicon Mechanics • Workstations

Computer Vision • LLM Inference • Generative AI

$6,475

SuperWorkstation532AW-C

Thinkmate • Workstations

Computer Vision • LLM Inference • Generative AI

$6,269

GeForce Extreme Creator Gaming PC

CyberpowerPC • Workstations

Computer Vision • LLM Inference • Generative AI

$6,249

AI STATION PRO AMD Threadripper TRX50 Tower Desktop PC

Steiger Dynamics • Workstations

Generative AI • LLM Training • LLM Inference

$6,229

Custom Enthoo Tower Workstation (up to 4x RTX Pro 6000 96GB)

Empowered PC • Workstations

Generative AI • LLM Training • LLM Inference

$6,200

Super Workstation521R-T

Silicon Mechanics • Workstations

LLM Inference • Data Analytics

$6,143

BIZON X4000 – AMD Threadripper 7960X 7970X 7980X Processors AI Workstation Computer

Bizon • Workstations

Computer Vision • LLM Inference • Generative AI

$6,046

SuperWorkstation521R-T

Thinkmate • Workstations

Computer Vision • LLM Inference

$5,937

Orion Advanced (Xeon-W) AI Workstation

Central Computer • Workstations

Computer Vision • LLM Inference

$5,932

APEXX S4

Boxx • Workstations

Computer Vision • LLM Inference

$5,797

M-Class V2 Good

Origin PC • Workstations

LLM Inference • Data Analytics

$5,174

BIZON G3000 G2 – 2 GPU 4 GPU RTX 5090 AI Workstation PC

Bizon • Workstations

Computer Vision • LLM Inference • Generative AI

$5,136

M-Class V2 Better

Origin PC • Workstations

LLM Inference • Data Analytics

$5,085

Nvidia DGX Spark Founders Edition Grace Blackwell 20 core Arm (10 Cortex-X925 + 10 Cortex-A725) 128GB LPDDR5x 1000 AI TOPS DGX OS

Central Computer • Workstations

LLM Inference • Data Analytics

$4,700

Gigabyte AI TOP ATOM DGX Spark NVIDIA GB10 Grace Blackwell 128GB RAM

Central Computer • Workstations

Computer Vision • LLM Inference

$4,500

PNY Nvidia DGX Spark Grace Blackwell Architecture 20 core Arm (10 Cortex-X925 + 10 Cortex-A725) 128GB LPDDR5x 1000 AI TOPS DGX OS

Central Computer • Workstations

LLM Inference • Data Analytics

$4,500

APEXX E3

Boxx • Workstations

Generative AI • LLM Inference • Computer Vision

$4,399

Thelio Astra

System76 • Workstations

LLM Inference • Data Analytics

$4,199

Panorama Prebuilt (Ultra 9 285K, 96GB DDR5, RTX 5080, 4TB SSD)

Empowered PC • Workstations

Computer Vision • LLM Inference • Generative AI

$4,085

Early Memorial Day 9950X3D2

CyberpowerPC • Workstations

Computer Vision • LLM Inference • Generative AI

$3,815

BIZON X3000 G2 – AMD Ryzen AI Workstation PC for local LLM – Up to 192 GB VRAM, 16 Cores

Bizon • Workstations

Computer Vision • LLM Inference • Generative AI

$3,754

L-Class V2

Origin PC • Workstations

Generative AI • LLM Inference

$3,710

Retro95

Maingear • Workstations

Computer Vision • LLM Inference • LLM Fine-Tuning

$3,699

Bizon V3000 G3 – Intel Core i9-14900K 24 Cores 14th Gen Workstation

Bizon • Workstations

Computer Vision • LLM Inference • Generative AI

$3,668

M-Class V2

Origin PC • Workstations

LLM Inference • Data Analytics

$3,600

Bizon V3000 G4 – Intel Core Ultra 9 285K GPU AI Workstation PC for local LLM

Bizon • Workstations

Computer Vision • LLM Inference • Generative AI

$3,556

AMD A+A

Origin PC • Workstations

Generative AI • LLM Inference • Computer Vision

$3,512

Infinity XLC Gaming PC

CyberpowerPC • Workstations

Computer Vision • LLM Inference • Generative AI

$3,355

MILLENNIUM

Origin PC • Workstations

Generative AI • LLM Inference • LLM Training

$3,245

Gaming PC Master 9500

CyberpowerPC • Workstations

Computer Vision • LLM Inference • Generative AI

$3,145

NEURON

Origin PC • Workstations

Generative AI • LLM Inference • Computer Vision

$3,137

AI STATION PRIME Intel Core Ultra Desktop PC

Steiger Dynamics • Workstations

Generative AI • LLM Inference • Computer Vision

$3,034

AI STATION PRIME AMD Ryzen Desktop PC

Steiger Dynamics • Workstations

Generative AI • LLM Inference • Computer Vision

$3,033

Neuron 4500X RTS 9010104

Origin PC • Workstations

Generative AI • LLM Inference • Computer Vision

$2,999

Neuron 4500X RTS 9010107

Origin PC • Workstations

Generative AI • LLM Inference

$2,899

Neuron 4500X RTS 9010106

Origin PC • Workstations

Generative AI • LLM Inference

$2,899

Gaming PC Infinity 8800 Pro SE

CyberpowerPC • Workstations

LLM Inference • Data Analytics

$2,849

SuperWorkstation531AD-I

Thinkmate • Workstations

Generative AI • LLM Inference • Computer Vision

$2,812

S-Class

Origin PC • Workstations

LLM Inference • Data Analytics

$2,725

ProMagix™ HD80 Ultra

Velocity Micro • Workstations

LLM Inference • Data Analytics

$2,709

ProMagix™ HD80 Workstation

Velocity Micro • Workstations

LLM Inference • Data Analytics

$2,699

Super Workstation531AD-I

Silicon Mechanics • Workstations

Generative AI • LLM Inference • Computer Vision

$2,675

AMD Extreme Gaming PC Configurator

CyberpowerPC • Workstations

LLM Inference • Data Analytics

$2,575

RDY Y70 B01

iBUYPOWER • Workstations

LLM Inference • Data Analytics

$2,549

Infinity 8800 Pro Gaming PC

CyberpowerPC • Workstations

LLM Inference • Data Analytics

$2,535

RDY Prebuilt Computers

iBUYPOWER • Workstations

LLM Inference • Data Analytics

$2,499

RDY Prebuilt Computers

iBUYPOWER • Workstations

LLM Inference • Data Analytics

$2,499

RDY Prebuilt Computers

iBUYPOWER • Workstations

LLM Inference • Data Analytics

$2,499

RDY Prebuilt Computers

iBUYPOWER • Workstations

LLM Inference • Data Analytics

$2,499

RDY Prebuilt Computers

iBUYPOWER • Workstations

Computer Vision • LLM Inference • Generative AI

$2,499

GeForce Elite Gaming PC

CyberpowerPC • Workstations

LLM Inference • Data Analytics

$2,379

AMD Elite Gaming PC Configurator

CyberpowerPC • Workstations

LLM Inference • Data Analytics

$2,349

Prebuilt PC GML 99674

CyberpowerPC • Workstations

LLM Inference • Data Analytics

$2,269

RDY Element 9 Pro R07

iBUYPOWER • Workstations

LLM Inference • Data Analytics

$2,099

WhisperStation Quiet Workstation

Microway • Workstations

LLM Inference • Data Analytics

$1,999

Prebuilt PC GXL 8271

CyberpowerPC • Workstations

LLM Inference • Data Analytics

$1,799

Custom Sentinel AI HPC Workstation (up to 2x RTX Pro 6000 96GB)

Empowered PC • Workstations

Computer Vision • LLM Training • LLM Inference

$1,260

AIME G500

AIME • Workstations

LLM Inference • Data Analytics

$124

ASUS Ascent GX10

AIME • Workstations

LLM Inference • Data Analytics

$39